What the NBA’s three-point revolution teaches us about AI adoption

Surface Area Strategy: Why the Best Teams Are Taking More Shots, Not Better Ones

Happy New Year!

If you’re anything like us, you’re thinking about what to do differently in 2026. New goals. New habits. New experiments.

This article is about that last one.

Because the biggest shift we’ve seen in AI adoption isn’t about which tools to use. It’s not about which workflows to automate.

It’s about how many shots you’re willing to take.

Most people are playing the wrong game. Here’s what we mean.

Game 7. Eastern Conference Finals. TD Garden. 17,000 fans.

The Miami Heat had just watched a 3-0 series lead against Boston evaporate. They’d gone up by shooting the lights out from three (48% in those first three games). Then variance showed up. Boston ripped off three straight wins, while Miami’s three-point percentage bottomed out at 29%.

The “live by the three, die by the three” crowd was having their moment.

So what did Miami do in the deciding game?

They shot 28 threes.

Not fewer threes. Not “safer” threes. They launched the same shots that had just cost them three straight games.

Made 14. Shot 50% from deep while Boston shot 21%. Won 103-84.

Here’s what most people miss about “live by the three, die by the three”: the variance penalty exists, but it’s not the main determinant of outcomes.

In the 2024 playoffs, teams with higher three-point shooting percentages than their opponents went 49-13. That’s a 79% win rate.

The math says: take the shots.

The teams that win aren’t avoiding high-variance strategies. They’re building systems that generate enough good looks that variance doesn’t decide their season.

When variance swung against Miami, they didn’t abandon the strategy. They trusted the system and kept shooting.

The NBA’s three-point revolution didn’t happen because players got better at shooting.

It happened because teams finally did the math.
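The simplest version of that math is expected points per shot: the value of a make times the chance of making it. The sketch below uses hypothetical percentages (40% on long twos, 36% on threes) for illustration, not figures from any specific season.

```python
def expected_points(shot_value: int, make_pct: float) -> float:
    """Expected points from one attempt: points if made times probability of making it."""
    return shot_value * make_pct


# Hypothetical shooting percentages, for illustration only.
long_two = expected_points(2, 0.40)  # 0.80 points per shot
three = expected_points(3, 0.36)     # 1.08 points per shot

print(f"long two: {long_two:.2f}, three: {three:.2f}")  # long two: 0.80, three: 1.08
```

At 36%, a three is worth as much as a two made 54% of the time (1.08 / 2), which is why teams traded long twos for threes.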

The Corporate Version of “Live by the Three”

This maps uncomfortably well onto how most organizations treat AI.

They’re not avoiding AI because it can’t work. They’re avoiding it because “too many experiments” sounds like chaos.

So they do the “responsible” thing.

One pilot. One committee. One perfect use case. One big bet.

This feels safe. It feels professional. It feels like how serious organizations operate.

It’s also exactly how you end up taking too few shots.

The companies moving fastest with AI aren’t the ones with the best single idea. They’re the ones with the best loop: generate options, test quickly, kill fast, double down on winners.

In an era where attempts are cheap, the real risk isn’t volatility.

The real risk is being attempt-poor.

The Shift Nobody Wants to Admit

AI didn’t just make work faster.

It made attempts cheaper.

Read that again.

For your entire career, you’ve been trained for a world where experiments are expensive. Where trying things costs time, money, reputation. Where the rational move is to plan more, debate more, protect yourself.

That world is gone.

Here’s what that looks like in practice:

A designer who used to spend a full day on one landing page concept can now generate ten variations in an hour. A copywriter testing headlines used to write five options and pick a favorite; now they can generate fifty, filter to the best ten, and actually A/B test them. A product prototype that took two weeks to build can be scaffolded in a day. Customer research that required scheduling ten interviews can be supplemented with AI-analyzed feedback patterns in minutes.

The cost of a single attempt didn’t drop 10%. It dropped 90%.

When attempts are expensive, the rational strategy is: plan more, coordinate more, debate more, protect reputation.

When attempts are cheap, the rational strategy flips: generate options, run parallel bets, learn quickly, kill fast.

Most people are still playing the old game. That’s why they’re losing.

NFX put it perfectly in their recent piece on the next mental state for founders: “AI has made risk-taking rational at a scale we’ve never seen before.”

Think about the math for a second.

If any single experiment has a 5% chance of revealing something meaningful, running ten independent experiments gets you close to a 40% chance of finding something worthwhile. Run 100 and your odds climb past 99%.
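Those odds come from the complement rule: if each attempt independently pays off with probability p, the chance that at least one of n attempts pays off is 1 - (1 - p)^n. A minimal sketch, assuming the attempts really are independent (correlated experiments would do worse):

```python
def p_at_least_one_hit(p_single: float, n_attempts: int) -> float:
    """Chance that at least one of n independent attempts pays off."""
    return 1 - (1 - p_single) ** n_attempts


for n in (1, 10, 100):
    print(n, round(p_at_least_one_hit(0.05, n), 3))
# prints: 1 0.05, then 10 0.401, then 100 0.994
```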

The implication is wild: it’s now rational to test the strange, ambitious, or non-obvious.

In fact, the real risk in this new environment is failing to explore widely enough. Because the upside now gathers in places that were previously too expensive or time-consuming to reach.

Jeff Bezos articulated this better than anyone. In his 2015 shareholder letter, he made a point that changed how we think about experimentation:

“We all know that if you swing for the fences, you’re going to strike out a lot, but you’re also going to hit some home runs. The difference between baseball and business, however, is that baseball has a truncated outcome distribution. When you swing, no matter how well you connect with the ball, the most runs you can get is four. In business, every once in a while, when you step up to the plate, you can score 1,000 runs.”

In baseball, your upside is capped at four runs per swing. In business, one experiment can return 1,000x.

That asymmetry changes everything.

And AI just made each swing dramatically cheaper.
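Bezos’s asymmetry and cheaper attempts combine in a simple expected-value calculation: chance of a hit times the size of the hit, minus the cost of trying. The numbers below are hypothetical; the drop in cost from 20 to 2 mirrors the 90% figure earlier in this piece.

```python
def expected_value_per_attempt(p_hit: float, payoff: float, cost: float) -> float:
    """Expected return of one attempt: chance of a hit times its size, minus its cost."""
    return p_hit * payoff - cost


# Hypothetical: a 1% shot at a 1,000-unit outcome.
before_ai = expected_value_per_attempt(0.01, 1_000, cost=20)  # -10: not worth taking
after_ai = expected_value_per_attempt(0.01, 1_000, cost=2)    # +8: worth taking, repeatedly
capped = expected_value_per_attempt(0.01, 4, cost=2)          # baseball-style cap: still negative

print(before_ai, after_ai, round(capped, 2))
```

Same odds, same upside: only the cost moved, and the sign of the bet flipped. With the upside capped, cheaper swings alone would not have been enough.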

Bezos built Amazon into one of the most valuable companies in history by treating invention as a numbers game. He talks about generating 100 unusual ideas, knowing 99 will die under scrutiny and one might be a breakthrough.

That’s the opposite of Perfect-Shot Culture.

The winners are not the teams with the best single idea.

The winners are the teams with the best loop.

Proof That Cheaper Experimentation Changes Outcomes

This is where the argument stops being philosophical and becomes operational.

When you give organizations a cheaper way to test ideas, their outcomes change.

A large body of work on online experimentation (summarized in “The Surprising Power of Online Experiments” in Harvard Business Review) finds that firms embracing A/B testing improve across multiple dimensions: they fail faster when young and scale faster when they find traction.

Here’s a story from that HBR article that illustrates the point perfectly.

In 2012, an employee at Microsoft’s Bing proposed changing how ad headlines were displayed on search results. It was a small tweak, nothing flashy. Program managers deemed it low priority, and it sat on the backlog for more than six months.

Then one engineer decided to just test it. Within hours, the variation was generating abnormally high revenue and triggered an internal “too good to be true” alert, which usually signals a bug.

After investigation, the team confirmed the result: a 12% increase in revenue. Worth over $100 million annually in the U.S. alone. Without hurting user experience.

That “low-priority” headline change became the best revenue-generating idea in Bing’s history.

No one could have reliably picked it as the winner in advance. Experimentation surfaced it.

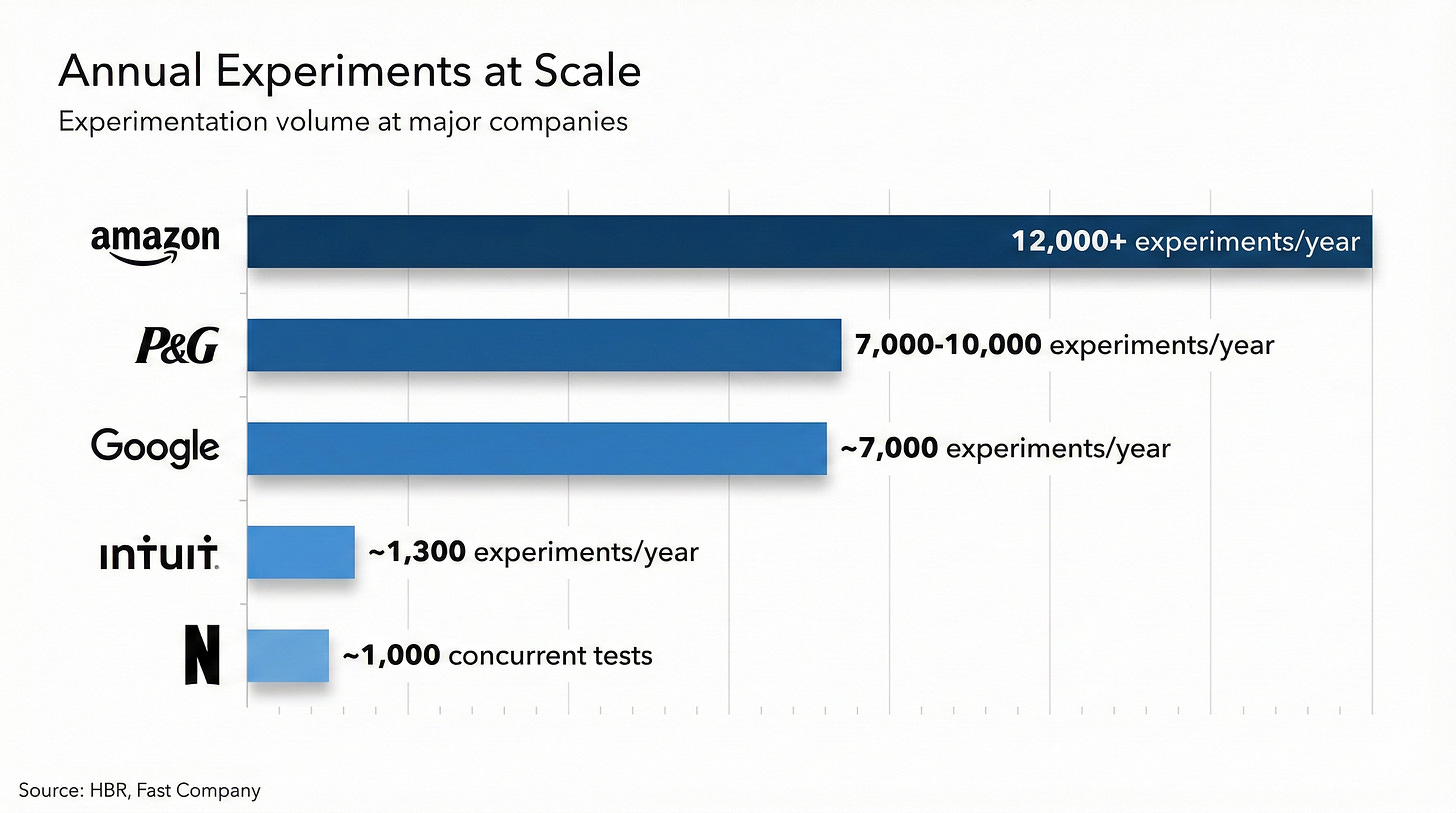

Zoom out and the pattern holds. As Fast Company reported in “Why These Tech Companies Keep Running Thousands Of Failed Experiments,” the most innovative companies treat experimentation volume as a core capability:

Intuit runs roughly 1,300 experiments per year. Procter & Gamble runs 7,000-10,000. Google runs about 7,000. Amazon ramped from hundreds to over 12,000 annually. Netflix often has ~1,000 concurrent tests running.

That’s surface area in the real world.

Lots of shots. Running continuously. With an operating system underneath.

Surface Area Strategy: The Model

Let’s name it.

Surface Area Strategy is maximizing the number of credible shots at value you can take per unit time, while protecting quality through explicit rules.

If you’ve been using AI well, you’ve probably felt this already.

At some point you stop asking “How do I do this task faster?” and start asking “How many ways can I take a shot at this outcome?”

Not to spray randomness. To create options.

Because in high-iteration systems, quality is rarely the result of one perfect attempt.

Quality is the output of selection.
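One way to see why selection produces quality: if each attempt’s quality were an independent random draw (here, hypothetically, uniform between 0 and 1), the expected quality of the best of n attempts is n/(n+1). The Monte Carlo sketch below checks that; it illustrates the selection effect under that assumption, not a measured property of real AI output.

```python
import random


def average_best_of(n: int, trials: int = 10_000, seed: int = 0) -> float:
    """Monte Carlo estimate of the expected quality of the best of n attempts."""
    rng = random.Random(seed)  # seeded so the estimate is reproducible
    total = 0.0
    for _ in range(trials):
        # Assumption: each attempt's quality is an independent Uniform(0, 1) draw.
        total += max(rng.random() for _ in range(n))
    return total / trials


for n in (1, 5, 20):
    print(f"best of {n:>2}: simulated {average_best_of(n):.3f}, exact {n / (n + 1):.3f}")
```

Going from one attempt to five lifts the expected best from about 0.50 to about 0.83, with no single attempt getting any better.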

This is the deeper truth most people miss: AI ROI isn’t savings. It’s optionality.

We’re not just writing about this—we’re living it. Over the past few months, we’ve been running our own Surface Area experiments across content, community, and tools. Some have already shown signal. Others we’ve killed. A few are about to ship. More on that soon.

Why This Has Always Been True (But Now It’s Obvious)

There’s an old tension in organizational learning that James March made famous in his 1991 paper: exploration versus exploitation.

Exploitation is sharpening the spear. Efficiency. Refinement. Optimization of what you already know works.

Exploration is putting more lines in the water. Search. Variation. Experimentation with what might work.

Every organization has to balance both. But the optimal balance depends on the cost of exploration.

When exploration is expensive, you lean toward exploitation. When exploration becomes cheap, the rational move is to explore more.

Entertainment has quietly been living this for decades.

On The Knowledge Project, Netflix co-founder Reed Hastings explains how decisions about shows move through stages: from a sketch to a script, then script plus cast, then dailies, then a fully edited show, then test screenings. At each stage you get more information and become more likely to be right, but judgment never disappears.

When Hastings talks about Squid Game, his point is striking: even after 20 years of doing this, the show’s global breakout surprised them. The lesson isn’t that Netflix can predict perfectly. It’s that they’ve built a system to learn which things are likely to get popular over time. (Episode page)

AI has shifted the cost curve of exploration in the same way streaming shifted the cost curve of piloting shows.

If you keep operating like exploration is expensive, you’re playing the wrong game.

The $1.75 Billion Cautionary Tale

Now for the counterexample.

What happens when you bet everything on one perfect shot?

In April 2020, Quibi launched.

On paper, it looked unstoppable. Co-founded by Jeffrey Katzenberg (former Disney chairman, DreamWorks co-founder) and Meg Whitman (former CEO of eBay and HP). They raised $1.75 billion before launch. Content deals with A-list Hollywood talent. Hundreds of millions on marketing.

They had one thesis: people want premium short-form video content on their phones.

And they were going to execute it perfectly.

Six months later, Quibi was dead.

What went wrong?

By staking everything on one launch, Quibi never gave itself room to iterate. They built the entire business around assumptions they hadn’t validated. When the market didn’t respond, there was no mechanism to adjust.

As Babson’s analysis noted, the leadership ran operations like a traditional corporation rather than a learning machine.

Here’s the contrast that makes this painful.

While Quibi was perfecting its one big launch, TikTok was running thousands of small experiments on content formats, algorithms, and user engagement. They didn’t bet on one perfect thesis. They built a system that tested constantly and doubled down on what worked.

The results speak for themselves. Quibi burned through $1.75 billion and shut down in six months. TikTok now has 1.6 billion monthly active users, generated $23 billion in revenue in 2024, and was the most downloaded app in the world.

The lesson isn’t that Quibi had bad people or bad intentions. Katzenberg and Whitman are legitimately accomplished.

The lesson is this: Perfect-Shot Culture, no matter how well-resourced, loses to Surface Area Strategy when the environment rewards learning speed.

Quibi spent $1.75 billion on one swing. TikTok took thousands of small swings and built a global phenomenon.

The Trap: Surface Area Without Governance Becomes Noise

Here’s the part most “run more experiments” pieces skip.

When you increase attempts, you don’t just increase your chances of finding a win. You also increase your chances of convincing yourself something is a win when it isn’t.

If you take enough shots, a few will go in by luck.

And once your team wants a bet to be true, it becomes easy to move goalposts, cherry-pick flattering metrics, or stop early when initial numbers look promising.
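How many “wins” should luck alone produce? A rough sketch, assuming every bet is actually a dud, the bets are independent, and each is judged at the conventional 5% significance level. The stricter per-bet threshold uses the Bonferroni correction, one standard (and deliberately conservative) fix for running many tests at once.

```python
def false_win_stats(n_bets: int, alpha: float = 0.05):
    """If NONE of n independent bets has a real effect, what does luck alone produce?"""
    expected_false_wins = n_bets * alpha           # average number of lucky "wins"
    p_any_false_win = 1 - (1 - alpha) ** n_bets    # chance of at least one lucky "win"
    bonferroni_alpha = alpha / n_bets              # stricter threshold for each bet
    return expected_false_wins, p_any_false_win, bonferroni_alpha


exp_wins, p_any, strict = false_win_stats(30)
print(f"expected false wins: {exp_wins:.1f}")              # 1.5
print(f"P(at least one false win): {p_any:.0%}")           # 79%
print(f"Bonferroni per-bet threshold: {strict:.4f}")       # 0.0017
```

Replication is the cheaper defense: requiring a dud to pass twice, independently, before it counts cuts its false-win chance from 5% to 0.25%.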

Netflix hit this problem at scale. Running thousands of experiments requires consistent decision rules for what to ship, not endless debate about each result. Their experimentation platform uses pre-registered hypotheses, standardized metrics, and automated statistical analysis to prevent teams from gaming results. (Netflix TechBlog explainer)

The enemy isn’t experimentation.

The enemy is experimenting without rules.

Surface Area Strategy is not “go faster and hope.”

It’s go wider with guardrails.

Experimentation Hygiene: The Rules That Make Surface Area Safe

Compliance, security, and brand risk are real constraints. Surface Area Strategy is not permission to be reckless. It’s a way to be bolder without being sloppy.

Rule 1: Pre-register what success means. Before you run the bet, define what counts as a win. Not twenty metrics. One or two. Write them down before you see results.

Rule 2: Pre-commit kill criteria. Define what “this is not working” looks like in advance. A reply rate below 1% after 50 sends. Zero conversions after 100 impressions. Whatever the threshold is, set it before you fall in love with the idea.

Rule 3: Assume some wins are fake. If you run 30 bets, a few will “win” by luck. Your job is to filter, not celebrate prematurely. Replication is your friend.

Rule 4: Separate explore vs. exploit lanes. You can’t run 1,000 experiments on core production systems. But you can run 1,000 around messaging, prototypes, internal tooling, and research. Think of it like basketball: you can’t take a heat-check three every possession, but you can absolutely structure an offense that creates more good looks.

Rule 5: Know when to flip from explore to exploit. Surface Area Strategy isn't "explore forever." You shift from exploration to exploitation when: you've found a winner worth scaling, the market window is closing, or you've gathered enough signal to make a confident bet. The point isn't perpetual experimentation—it's earning the right to go big on something through rapid learning.
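Rules 1 and 2 are easiest to honor when they are written down as data before any results arrive. Below is a minimal sketch, not a prescribed tool: the class name, fields, and the 5% win threshold are hypothetical, while the “below 1% after 50 sends” kill rule comes from Rule 2. Making the record frozen means Python raises an error if someone tries to reassign a threshold after the fact.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: thresholds can't be reassigned once results arrive
class PreRegisteredBet:
    name: str
    metric: str            # Rule 1: one primary metric, e.g. "reply_rate"
    win_threshold: float   # Rule 1: rate at or above this counts as a win
    kill_threshold: float  # Rule 2: rate below this means "stop"
    min_sample: int        # Rule 2: don't judge before this many trials


def decide(bet: PreRegisteredBet, successes: int, trials: int) -> str:
    """Apply the pre-committed rules to observed results; no new criteria allowed."""
    if trials < bet.min_sample:
        return "keep_running"  # too early: stopping now invites cherry-picking
    rate = successes / trials
    if rate < bet.kill_threshold:
        return "kill"
    if rate >= bet.win_threshold:
        return "candidate_win"  # Rule 3: replicate before celebrating
    return "inconclusive"


# Hypothetical outreach bet: kill if reply rate is below 1% after 50 sends.
bet = PreRegisteredBet("Founder-voice cold email", "reply_rate", 0.05, 0.01, 50)
print(decide(bet, successes=0, trials=50))  # kill
print(decide(bet, successes=4, trials=50))  # candidate_win
print(decide(bet, successes=1, trials=20))  # keep_running
```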

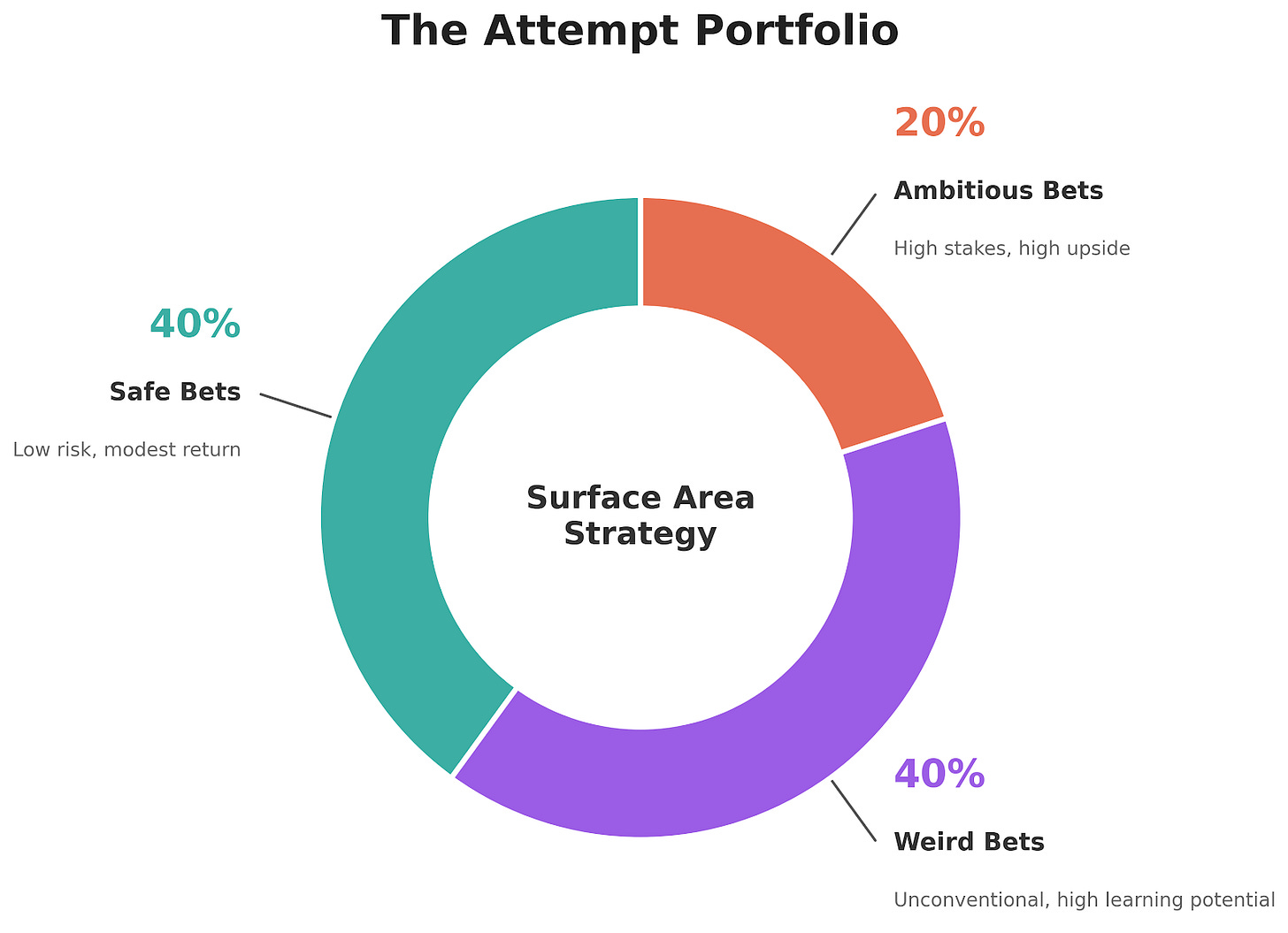

The Attempt Portfolio

If you want to operationalize Surface Area Strategy this week, build an Attempt Portfolio for any outcome you care about.

Define three tiers:

Safe bets (40%): Low cost, high probability of modest return.

Weird bets (40%): Unconventional approaches where most will fail but some will teach you something important.

Ambitious bets (20%): Higher stakes with higher potential return.

We landed on these ratios through trial and error: safe bets keep you moving, weird bets teach you something, ambitious bets occasionally rewrite the game.

For each attempt, write:

Bet name (7 words or fewer)

Hypothesis: “If we do X, we expect Y.”

Steps: max 5 bullets

Signal: one primary metric

Kill criteria: the threshold that means “stop”

Time-to-signal: how long before you decide

Owner: who is accountable

The magic isn’t in any single row. The magic is having twelve rows instead of one, with clear rules for each.
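The portfolio can also live as structured data so its rules get checked automatically. A sketch under assumptions: the field names and validation below are hypothetical, and the `allocate` helper simply splits a batch of attempts by the 40/40/20 ratios using largest-remainder rounding (so twelve attempts become 5 safe, 5 weird, 2 ambitious).

```python
from dataclasses import dataclass

# Tier shares from the Attempt Portfolio, in whole percent.
TIER_SHARES = {"safe": 40, "weird": 40, "ambitious": 20}


@dataclass
class PortfolioRow:
    bet_name: str            # 7 words or fewer
    tier: str                # "safe", "weird", or "ambitious"
    hypothesis: str          # "If we do X, we expect Y."
    steps: list[str]         # max 5 bullets
    signal: str              # one primary metric
    kill_criteria: str       # the threshold that means "stop"
    time_to_signal_days: int
    owner: str

    def __post_init__(self):
        if len(self.bet_name.split()) > 7:
            raise ValueError("bet name should be 7 words or fewer")
        if len(self.steps) > 5:
            raise ValueError("keep steps to 5 or fewer")
        if self.tier not in TIER_SHARES:
            raise ValueError(f"unknown tier: {self.tier}")


def allocate(n_attempts: int, shares: dict[str, int] = TIER_SHARES) -> dict[str, int]:
    """Split n attempts across tiers by percentage, rounding by largest remainder."""
    base = {tier: n_attempts * pct // 100 for tier, pct in shares.items()}
    remainders = {tier: n_attempts * pct % 100 for tier, pct in shares.items()}
    leftover = n_attempts - sum(base.values())
    for tier in sorted(shares, key=lambda t: remainders[t], reverse=True)[:leftover]:
        base[tier] += 1
    return base


row = PortfolioRow(
    bet_name="Ten AI headline variants, A/B tested",
    tier="safe",
    hypothesis="If we A/B test ten AI-drafted headlines, we expect a higher open rate.",
    steps=["Draft 50 headlines", "Filter to 10", "Split list", "Send", "Compare open rates"],
    signal="open rate",
    kill_criteria="no variant beats control after 1,000 sends",
    time_to_signal_days=3,
    owner="me",
)
print(allocate(10))  # {'safe': 4, 'weird': 4, 'ambitious': 2}
print(allocate(12))  # {'safe': 5, 'weird': 5, 'ambitious': 2}
```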

The Surface Area Architect (Prompt)

Use this when you want to turn a vague goal into a week’s worth of managed surface area.

You are my "Surface Area Architect."

Objective: Turn ONE outcome into an experiment portfolio that increases surface area without creating chaos.

CONTEXT

- My role/situation: [paste]

- Outcome I want in the next 7 days: [paste]

- Constraints (time, risk, approvals, budget, brand, compliance): [paste]

- Audience/customer/stakeholder: [paste]

TASK

1) Generate 12 attempts (experiments) aimed at this outcome.

- 5 safe

- 5 weird

- 2 ambitious

Each attempt must be runnable in < 2 hours and should not require new approvals.

2) For each attempt, output a portfolio row with:

- Bet name (7 words or fewer)

- Hypothesis (one sentence)

- Steps (max 5 bullets)

- Signal (ONE primary metric)

- Kill criteria (a clear threshold)

- Cost (time estimate)

- Owner (me)

3) Add governance:

- Recommend a default "Time-to-signal" window for each attempt

- Identify 3 ways I might accidentally move the goalposts, and how to prevent each

OUTPUT FORMAT

A) A table with 12 rows (the portfolio)

B) A 7-day schedule that sequences the attempts (what to run on which day)

C) A 10-minute weekly review agenda (what to decide, what to kill, what to keep)

QUALITY CHECK

Before you finalize, verify:

- Every bet has a measurable signal and a real kill criterion

- At least 30% of bets are genuinely uncomfortable

- Nothing violates the stated constraints

We’ve used variations of this across multiple projects. Copy it, modify it, make it yours.

The Surface Area Scorecard

For executives and operators, track this weekly:

Attempts shipped: How many experiments actually went live.

Time-to-signal: How quickly you learn something decisive from each attempt.

Kill rate: What share of launched bets you shut down.

Selection quality: Of surviving bets, what’s the hit rate after 2-4 weeks.

Throughput by lane: Are you experimenting across GTM, product, and ops, or just in one area?

Notice what’s missing: hours saved.

Time saved is not the goal. It’s a byproduct.
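If the scorecard lives in a script rather than a slide, the metrics reduce to a few counts and ratios. A minimal sketch; the `Attempt` fields and the sample week are hypothetical, and time-to-signal is summarized as a median in days.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Attempt:
    lane: str                                  # e.g. "GTM", "product", "ops"
    shipped: bool                              # did it actually go live?
    days_to_signal: Optional[float] = None     # None = no decisive signal yet
    killed: bool = False                       # shut down under its kill criteria
    hit_after_followup: Optional[bool] = None  # survivors: did it hold up 2-4 weeks later?


def weekly_scorecard(attempts: list[Attempt]) -> dict:
    shipped = [a for a in attempts if a.shipped]
    signal_days = [a.days_to_signal for a in shipped if a.days_to_signal is not None]
    survivors = [a for a in shipped if not a.killed]
    judged = [a for a in survivors if a.hit_after_followup is not None]
    return {
        "attempts_shipped": len(shipped),
        "median_days_to_signal": median(signal_days) if signal_days else None,
        "kill_rate": sum(a.killed for a in shipped) / len(shipped) if shipped else 0.0,
        "selection_quality": (
            sum(a.hit_after_followup for a in judged) / len(judged) if judged else None
        ),
        "throughput_by_lane": dict(Counter(a.lane for a in shipped)),
    }


# Hypothetical week of attempts.
week = [
    Attempt("GTM", shipped=True, days_to_signal=2, killed=True),
    Attempt("GTM", shipped=True, days_to_signal=3, hit_after_followup=True),
    Attempt("product", shipped=True, days_to_signal=5, killed=True),
    Attempt("ops", shipped=True),          # live, but no signal yet
    Attempt("product", shipped=False),     # never went live, so it doesn't count
]
print(weekly_scorecard(week))
```

Hours saved is deliberately absent from the output.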

The Real Villain: Perfect-Shot Culture

Perfect-Shot Culture looks like professionalism.

It sounds like:

“We need alignment before we move forward.”

“We need a strategy before we start experimenting.”

“We need to identify the perfect use case.”

“We need to select the right tool first.”

You’re in Perfect-Shot Culture if:

Your last three AI conversations were about tool selection, not experimentation

Your team has discussed “the right use case” for more than two weeks

You have more strategy documents than shipped experiments

Sometimes those are real constraints. Resource allocation and coordination matter.

But most of the time, these phrases are a socially acceptable way to avoid taking shots in public.

And in the AI era, that’s fatal.

Because while you’re holding the ball looking for the perfect shot, other teams are taking 20 good shots and learning from each one.

Quibi had all the alignment in the world. $1.75 billion in resources. Two legendary operators. A meticulously planned launch.

Amazon has “disagree and commit.” A culture where the question isn’t “Is this the perfect idea?” but “Can we test this quickly and learn something?”

The game changed. Most people haven’t caught up.

The 7-Day Challenge

New year. New experiments.

If you want to test Surface Area Strategy without turning your life into a chaos experiment, try this:

Day 1: Pick one outcome you care about this week.

Day 2: Use the prompt above (or do it manually) to generate 10 attempts at that outcome: 4 safe, 4 weird, 2 ambitious.

Days 3-6: Run the attempts and track the signal metric for each.

Day 7: Spend 30 minutes answering:

Which attempts showed signal worth pursuing?

Which attempts should be killed immediately?

What did you learn that changes your next round?

Keep 1-2 winners. Kill the rest. Start again.

No heroics. No perfection. Just reps.

Start your 2026 that way.

The Lesson of the Three-Point Revolution

The lesson of the 2023 Heat isn’t “three is better than two.”

It’s that volume is not the enemy. Unmanaged volume is.

Miami went up 3-0 taking their shots. When variance swung against them and Boston tied it 3-3, they didn’t abandon their identity. They walked into TD Garden for Game 7 and shot 28 threes anyway.

The teams that win aren’t the ones who avoid high-variance shots.

They’re the ones who build a system that generates enough good looks that variance doesn’t decide their season. And when variance does swing against them, they trust the process and keep shooting.

That’s Surface Area Strategy.

More attempts. More signal. Better selection. Faster compounding.

Every week you spend in Perfect-Shot Culture, debating which single pilot to run, is a week your competitors are running ten experiments and compounding their learnings.

AI made attempts cheap. The game changed.

The question isn’t whether you’ll adapt.

The question is whether you’ll lead this shift or chase it.

What will you ship this week?

As for us, we’ve got a few experiments of our own about to go live. New communities. New tools. New formats. You’ll see them hit your inbox over the next week.

Here’s to more shots in 2026.

— Zain & Zain

P.S. The prompt in this article is really effective. We’ve used variations of it across multiple projects. Copy it, modify it, make it yours. Then reply and tell us what you built.

P.P.S. If you know someone stuck in Perfect-Shot Culture, forward this to them. They’re holding the ball. Help them start shooting.

